-

§ Happy Thanksgiving

§ What would a synthetic cairn look like? Can we take anything from the elegant idea of stacking stones as a place-marker and apply it to collaborative sculpture? Build together with everyone who crosses through a specific site over time. Inevitably someone will dismantle it all but that can be just as poignant.

§ I wrote up a list of exhibit design challenges for an upcoming collaboration with a student group.

Create…

- an exhibit that requires cooperation between multiple people

- an exhibit that is meaningfully different each day

- an exhibit that cleverly communicates the passage of seconds

- an exhibit that cleverly communicates the passage of years

- an exhibit that rewards careful observation

- an exhibit that can be enjoyed equally by both blind and sighted guests

- an exhibit that is site-specific

§ New appliance alert! While 2023 is nearly over, we couldn’t let “the year of new appliances” go by without replacing one more faulty appliance.

First it was our washing machine. Shortly after, our refrigerator stopped working. A few months later it was our oven and now, finally, we are replacing our shoddy dishwasher. Delivery is December 6th so I can’t say much more until then but I’m frankly pretty excited.

Let’s all hope for a better theme for 2024.

§ With my focus on the kitchen, I decided to take care of something else I had been interested in for a while—I replaced my faucet and added an under-the-sink water filter unit. Even though it required a fair deal of awkwardly craning my body and sticking my head underneath my kitchen cabinets it was all a much easier job than I had feared.

§ I started watching Brit Marling’s new miniseries A Murder at the End of the World. You might remember her as the creator and star of the captivating and brilliantly weird show The OA a few years ago. The new series is ongoing with only three episodes out at the moment but so far, so good.

I’m also, of course, now re-watching The OA. It is such a special show. Completely weird but unfailingly committed and self-assured. A Murder at the End of the World doesn’t yet have quite that same spark but I have full faith that Brit Marling and Zal Batmanglij can deliver something special.

§ I ordered Teenage Engineering’s new EP–133 K.O. II sampler. It looks like a very beefed up version of one of their Pocket Operators, a series of devices I absolutely adore. I can’t wait to try it.

§ Checking out the album-centric music app Longplay reintroduced me to an old favorite album from a few years ago.

The album Woodkid For Nicolas Ghesquière—which was produced for a wild 2019 Louis Vuitton fashion show—is pretty darn close to a perfect record. I would love to find more music like it but I can’t quite articulate its genre cogently enough to search for similar artists. It is big, orchestral, and percussive. It has sparse but compelling vocals. It is overwhelmingly analog but it is not afraid to use digital manipulation where it is effective. Even Woodkid’s other music isn’t quite comparable. Tyondai Braxton’s Central Market feels like a sibling but other than that I am coming up blank.

§ Sam is back and I have a new least favorite word: ouster. I never want to hear the word “ouster” again.

§ Links

-

§ Had a big meeting to kick off a two year collaboration with the Alliance of Immersives in Museums. Got to meet a bunch of techy creative people from interactive science museums around the country.

A big topic was how to balance the flexibility and speed of pure immersive experiences with the unique possibilities that can only arise from traditional tactile exhibits.

§ How can desire paths be used in interior design? Do you keep the space reasonably empty and then add affordances—benches, walls, signage, trash cans—only after a period of careful observation? Do you make a reasonable initial guess and then remove and rearrange later? Is there some kind of carpeting that can reveal the paths trodden later, perhaps under some special light?

§ I bought the new 2D platformer Super Mario Bros. Wonder, hoping to capture some of the magic from the surprisingly excellent Donkey Kong Country: Tropical Freeze.

- The local co-op play is great. Had I not been spoiled by Tropical Freeze’s ingenious backpack mechanic I would be completely happy.

- Doing away with time limits was the right call. Now it is time to rethink the coin / life model. Celeste has had the right idea here all along: unlimited time, unlimited lives, the challenge comes from clever level design.

- In general it has been a really satisfying bite-sized game. Each level takes maybe five minutes or less so it’s easy to pick it up for a quick round during a moment of downtime.

§ I’m trying out Arc, a new-ish web browser that feels more like an operating system for the internet. It is weird and slightly fascinating.

A giant downside: their mobile app is awful. You can only open one tab at a time in the most unintuitive way imaginable. It is the opposite of the old Apple design adage of “it just works”. Although apparently a new, fully-featured mobile app is under development. Until that arrives I’ll probably stick with Safari for most of my browsing but I am grateful Arc is here and unafraid to throw away all assumptions on what a web browser can be.

§ I was hopeful OpenAI would decrease the price of their ChatGPT Plus subscription in the near future. $20 per month is a truly wild price. It is my most expensive subscription save for my cellular data plan. It says a lot that it is even remotely worth it at that price. Well this week OpenAI decided to pause new signups due to a “surge in usage” so the possibility for a price decrease any time soon feels pretty remote.

§ But also! The OpenAI board unexpectedly fired their CEO and co-founder Sam Altman on Friday. In response, their other co-founder Greg Brockman and at least three other senior researchers quit. So who knows! Anything can happen at this point!

§ I got a new Creality CR-M4 for work. I can’t attest to the quality yet—I’ve only had time for a couple of small test prints—what I can say is it is giant. Effectively an 18x18x18" build volume.

§ Links

- Unused three letter acronyms — there are a lot of them

- A blinking LED to assist with maintaining focus?

- Watt lies beneath

-

§ Some seriously smoky steak searing prompted me to check on my smoke detector. It turns out it doesn’t work and probably hasn’t for years.

I ordered a new one. Thanks smoky searing!

§ In other food news, I had three—count ‘em three—different soups for dinner this week. Chili verde, chili con carne, and kapusniak. Is this a sign that autumn has truly arrived?

§ I am beginning to realize that I enjoy creating objects, structures, and opportunities that facilitate self-directed learning more than I enjoy traditional teaching (“direct instruction”). This is all sort of a vague abstract notion at the moment but it was an interesting realization nonetheless.

On a very related note, the jungle gym turned 100 this year. I would love to design a playground sometime.

§ I’ve once again subscribed to a month of ChatGPT Plus. Initially, I was primarily excited to try their new voice feature. It is indeed impressive. I might try to make use of their recently announced text-to-speech API for article narration.

The new image uploading and analysis feature is even more incredible. It can act as a completely passable art critic.

Finally, the custom GPTs feature may have provided the most tangible, real world LLM use-case to date. I uploaded my health insurance policy’s 100 page information booklet to the GPT’s backend knowledge-base, started up a new chat session, and then was able to ask the custom GPT to explain the charges on an unexpected medical bill I recently received. Is it dumb from a privacy standpoint to upload all of this medical information to ChatGPT? Unequivocally yes, but the utility is unmatched.

§ The way Matt Webb describes his approach to learning and making really resonates with me.

For the last decade or so, the way to bring new products into the world was to think carefully and make PowerPoint decks and cover the walls in post-its. No longer. The landscape of possibilities is unknown so the appropriate approach is to roll your sleeves up. Things-which-are-made teach you about the technology, open up new thoughts, and (vitally!) let you work with people who aren’t as close to the technology as you but probably have better ideas.

I’ve always found that the best ideas arrive when a group of people come together around a physical prototype. Abstract brainstorming sessions all too often breed passionate opinionated disagreements while prototypes inspire solutions.

§ It is a weird new feeling, being proud of my state like this. I have to hand it to Ohio though because time and time and time again recently the state defied my pessimistic expectations in the polls. Great job!

§ I know last week I said something silly like the daylight saving time change will encourage me to “embrace the night”. In actuality I’ve been going to sleep earlier than ever but waking up early enough to watch the sunrise. It has been nice.

§ Links

-

§ I spent Tuesday evening pulling up the remainder of my vegetables in advance of an overnight freeze.

The tomatillos were the biggest surprise. They matured late so I was left with a harvest of just under four pounds—a fraction of what I have been getting the past few years. Over the weekend I used those, a basketful of poblanos, and some Hungarian peppers to make chili verde. A delicious autumn meal.

Although the San Marzano gave me a lot of blossom end rot trouble early in the season, it ultimately became one of my new favorite plants. I’ll certainly be growing more next year.

I’m hopeful for a longer, more plentiful growing season next year.

§ I planted six different varieties of garlic for a spring harvest. I can’t wait to see which one fares best.

§ We had the first snowfall of the year overnight Tuesday—very early! October 31st, for future reference.

§ Leaf blowing takes more skill than I appreciated. Raking is almost certainly more efficient at this point. The best I can manage with the leaf blower is to transport the leaves to other, equally inconvenient, areas of my yard.

§ During some of the warmer evenings this week I’ve been working on winterizing the backyard greenhouse. Our plan is to keep our quails there for the winter instead of moving them into the connected garage like last year. The best practice, as far as I can tell, is to use bubble wrap as an extra air barrier. I am looking into different options to provide active heating too.

§ As I write this, daylight saving time will have just started (or ended? I can never remember) which means, in practice, it will start getting dark at like 5:30 rather than 6:30. I used to get pretty upset about this but the truth is that there are just fewer hours of daylight at this time of year and there is no great solution to that other than moving somewhere more equatorial.

This year, I will embrace the night.

§ My big exhibit debut was a huge success. It has been a wild few months and this past week was a particularly big push to the finish line. Seeing it all come together I am, in one sense, exhausted. More than that, though, I am ready to do it again.

-

§ It is mayfly season along Lake Erie. Be mindful when opening your mouth outdoors lest you inadvertently ingest an aquatic insect or two.

§ Wes Anderson’s new short films are—as with his other work—inspiring to me in a way unmatched by most other media. There is a specific daring and playful quality to them that I find deeply motivating. It is almost as if he has either never seen another movie or perhaps he has seen all the movies but doesn’t feel beholden to any of them.

§ I’ve been trying to make drawing an everyday practice. It is, at least, an activity that occupies my hands without involving any technology. More than that, though, I’ve noticed that it helps me generate ideas more reliably than any other activity. The act of simply drawing shapes, connecting lines, and allowing my mind to free-associate has been more productive than I would have ever guessed.

§ I visited our local nature reserve for the first time since April. April! Unsurprisingly, I couldn’t find the grape vines I had stashed back then.

§ I managed to get access to Puzzmo, a new platform for puzzle games by Zach Gage of Really Bad Chess fame. It is such an unabashed celebration of play. It is shaping up to be my favorite internet discovery of the year.

§ An unabridged list of books I’ve started—but not finished—so far this year:

- The Dawn of Everything

- Strong Towns

- The Precipice

- What We Owe the Future

- Human Compatible

- Exhalation

- The Secret of Our Success

- Red Plenty

- Unflattening

- Chokepoint Capitalism

- The New House

That said, I’ve started reading Brian Merchant’s Blood in the Machine, a history of the Luddite movement. The writing is strong enough to take a subject I was slightly interested in and make it gripping.

§ Next Friday is the public opening of a huge interactive engineering exhibit at work. Over the past three months I, working with a team of two others, transformed a dim grey 10,000 square foot exhibit hall into a colorful celebration of architecture, construction, creativity—building.

Together we designed and built a dozen unique activity tables, nine enormous Lego block inspired pedestals, a six foot long LED-backed building surface, a robotics interactive field, a time-challenge game, and a giant car racing ramp alongside countless smaller elements.

The star of the show is a 50 square foot timber frame house structure with modular wall panels so that the public can “build a house together.”

The past few months have been a whirlwind and I’m sure this next week will be even crazier as we push to complete all of the finishing touches. I can’t wait.

§ Links

- David Pierce interviewed Cory Doctorow, Manton Reece, and Matt Mullenweg about POSSE/PESOS for Monday’s Vergecast episode. Need I say more?

- Hannah Diamond’s new album Perfect Picture is what you would get if E•MO•TION was written and performed by a cyborg. Highly recommended.

- Brick and mortar SEO – a New Yorker decided to name their new restaurant “Thai Food Near Me.” Was it a smart idea?

- Mini-FM - “I’m never going to be bored-browsing Netflix and get a faint glimmer of someone’s home-broadcast TV show wedged between menu items, there to view if I can tune in exactly right. My iPhone might be the means of production, in the right hands, but I’ll never own the means of distribution.”

-

§ I pickled a couple jars of cascabella peppers from the garden. I’m pretty sure I did everything correctly but I’m not yet confident enough in my canning skills to store them outside of the refrigerator.

§ Since starting this job, which regularly requires me to work between four different floors, my daily “flights climbed” Apple Health metric has been averaging right around 38. This is up from 15 when I was living in the flatlands of Chicago and 5 during the height of the pandemic.

§ I was gifted a 65 inch television to replace my previous 50 inch panel. There is something obscene about the truth revealed by a screen of this size. Yes, the television is often the main focus in my living room but I don’t want to be constantly reminded of that fact. I want to live a life where the TV plays an ancillary role in my leisure-time activities. A 65 inch screen doesn’t bring me any closer to achieving that.

After a few days I put my old small screen back and moved the 65 inch panel to a closet. Anyone need a big monstrosity of a TV?

§ It is shocking to me how poorly designed some of Amazon’s products are. The Kindle app on iOS, in particular, is indisputably the worst user interface I’ve seen in quite a while.

I really wanted to like it. “Whispersync for Voice”—their feature that syncs reading progress between ebook and audiobook copies of the same title—sounds incredible. The idea that I could listen to an audiobook while driving and then pick up right where I left off later in the ebook version is deeply compelling to me. So compelling, in fact, that I downloaded the Kindle and Audible apps and signed up for a trial of their “Kindle Unlimited” service. The last time I was so confused while navigating an interface was when I tried AWS a few years back.

Apple has all of the pieces in place to add a similar ebook/audiobook sync feature to their Books app. I hope one of these days they finally get around to it.

§ A major reorganization of my bathroom closet has made an inconvenient fact unavoidable: I own at least two dozen towels.

As with cups and mugs, the correct number of towels to own, per capita, is greater than one but certainly less than twelve.

§ I decided not to renew my subscription to Glass—the hip new social photo sharing site. It is a beautifully designed service but ultimately not something I have a need for in my life at the moment. After doing so, I realized that without an active subscription I can’t see or export my own photos.

Relying on my own website is clearly a much better decision for the long run. I tweaked a few elements of my photos page to borrow some of my favorite design ideas from Glass.

§ By Monday morning it had become apparent that I had caught a cold. It has been less than two months since my last one—not great! Thankfully it was short-lived. By the time Friday rolled around my symptoms had all but cleared up, save for some lingering congestion. Perfect timing on the phenylephrine news.

§ Links

- One day I will keep goats and a guard llama to protect them

- Question Mark, Ohio is a huge ongoing work of multimedia fiction. Think internet-scale Welcome to Night Vale.

- A catalog of Star Trek chairs that are commercially available

- Joe Rosensteel on streaming services and the Hollywood strikes

- Matt Webb on Big’s Backyard Ultra

-

§ I am frankly pumped about this sweater weather. Caroline and I spent a few brisk evenings decorating the yard for Halloween.

§ I’ve learned more about the inner-workings of my kitchen sink than I would prefer to. On Monday it decided to effectively stop draining. I didn’t quite realize how important my kitchen sink was until a quick rinse of the dishes from lunch resulted in six inches of standing water for the next several hours.

It was worse after I realized that a sizable fraction of the water that eventually drained wound up pooled in the cabinets under the sink. There was a leak.

After a bit of a panic running around with armfuls of sopping shopping bags and searching the garage for a suitable bucket, I was able to take a trip to the hardware store to buy a replacement for the blown gasket connecting two of the PVC drain pipes.

Replacing the rubber gasket fixed the leak but, if anything, it only exacerbated the slow draining problem. No longer allowed to drip and pool underneath my cabinets, the water had no recourse but to sit stagnant, occasionally alternating between the left and right basin.

I purchased an adorable miniature plunger that would be dedicated for this task. Out of all career options available to plungers, this job has got to be one of the most highly coveted. Unfortunately, our new friend proved ineffective. I made a home for him under the sink nestled between my collection of spray cleaners and sponges.

This is when I realized that I needed to do the task that I had been trying to avoid up until this point: disconnect all of the pipes leading to the wall and snake each section. I wish I could tell you that I discovered some disgusting obvious culprit, dear reader, but I didn’t. I cleared out a few small bits of gunk but, nevertheless, after I put everything back together the sink started draining normally. An overall unsatisfying conclusion, I realize. I am just glad I can clean my dishes again.

§ Is there a name for the practice of saving leftovers in a ziplock bag instead of a tupperware because it will be easier to inevitably throw it out, uneaten?

§ I found a new podcast that is super charming—Hemispheric Views. Think ATP but if two thirds of the hosts were Australian. Sold yet? Of course you are.

§ I started my third—or maybe fourth—rewatch of Twin Peaks. It has consistently endured as my favorite television show with everything else—The Wire, Utopia, Station Eleven—all jockeying for, at best, second place.

§ I’ve added a “list of lists” page to this site. Included is a list of Twin Peaks lines that I find myself quoting multiple times a year.

§ My big un-curated hyperpop playlist now contains twelve hours of music. It is, however, still only my second longest playlist. It trails quite a distance behind my nearly 24 hour long African blues, funk, and disco playlist.

§ I can’t wait for Cory Doctorow’s upcoming book The Lost Cause. The two hour(!) audiobook excerpt hooked me. It doesn’t hurt that I enjoyed his previous book Red Team Blues.

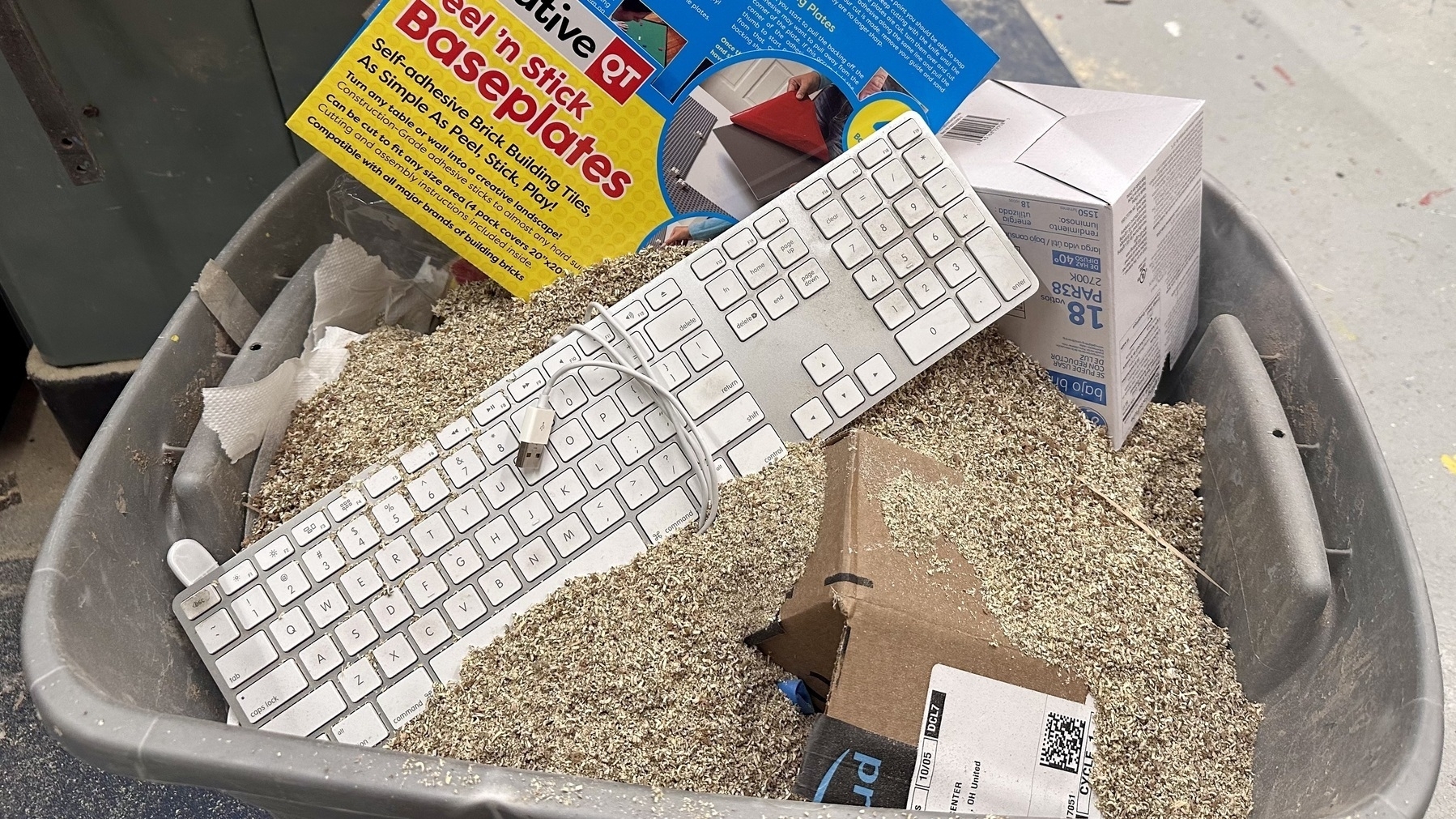

§ The bottom third of the cheapo VGA monitor connected to my CNC’s computer started flaking out after only a week or so. I can’t blame it since it is inundated with massive quantities of saw dust six hours a day. On Wednesday, we had our first true technological casualty though: an old Apple extended keyboard. Will the random Dell model that replaced it fare any better? Stay tuned.

§ Links

- Fake it ’til you fake it - A long and thoughtful piece by Nick Heer on computational photography and the extensive history of image manipulation

- A waterhole in the Namib desert

§ Recipes

- “Marry me” chicken - I guess it is a TikTok thing. I have to admit it was delicious.

-

It is an easy idea to mock: “Netflix is trying to become Blockbuster thirteen years after killing it”, etc. I think this could be a smart move though, even if these locations turn out to be not much more than upscale, well-run theaters.

Americans, on average, visit movie theatres once a year. Alamo Drafthouse, with only fifteen locations outside of Texas, has built an outsized reputation by delivering a superior moviegoing experience compared to traditional theater chains. I don’t think it is a stretch to imagine Netflix achieving a similar status. Their goals are larger than simply building a theater though.

Andrew Liszewski, The Messenger:

Netflix plans to open retail destinations where fans of the company’s most popular streaming series can buy merchandise, dine on themed food and even partake in unique experiences, like a Squid Game obstacle course or a visit to the Upside-Down […]

Slated to open sometime in 2025, the new venues will fall under the name “Netflix House” and will be the company’s first permanent locations […]

“Rotating installations,” a mix of both casual and high-end food offerings and even “ticketed shows” will encourage fans to return to the venues frequently.

Netflix’s prospects were looking grim for a while but I am increasingly convinced that if there is eventually only going to be one streamer standing, it will be them.

-

§ My tomatillo plants are finally, for the first time this year, beginning to grow fruit. In past years, I would be drowning in my favorite husked nightshade by now. There has been something distinctly different about this year’s growing season. My pet theory is that it has something to do with all of the smoke from the Canadian wildfires earlier in the summer.

§ I was in an all-staff meeting during FEMA’s emergency alert test on Wednesday. My phone received the alert a full two minutes after everyone else. No idea why. I do know that I was not the only person with an iOS 17 iPhone connected to the Verizon network so I don’t think it was an operating system or carrier thing.

§ Almost nonstop CNC milling this week taught me an important lesson: a large number of inexpensive bits is more useful than fewer fancy bits. This really sunk in after dulling a $100 compression bit in two days. I put in an order for eighteen cheapo bits to replace it.

§ Alright I finally did it, I traded in my long-neglected iPad and got a pair of the new AirPod Pros.

The first thing I noticed is that they connect to my phone immediately, every time. Above everything else, this is the largest quality-of-life improvement overall. My previous pair of AirPods had been getting less and less reliable in this regard over time. It would sometimes take minutes of fiddling before they would finally connect. There is none of that here.

Now, the sound quality overall? Eh… maybe I could tell the first and second generation Pros apart in a blind test. Maybe. If anything, songs that feature a particularly deep bass might sound a bit richer. Overall, there is absolutely not a huge difference here though which I will admit is a bit disappointing.

The new integrated volume control is very awkward to use but I’m glad to have it. Maybe I just need more practice.

Over the years, I’ve found myself typically using AirPods in one of two ways:

- Wearing a single AirPod with Transparency mode enabled so that I can maintain as much awareness of the world around me as possible.

- Wearing both AirPods with Noise Cancellation mode enabled to maximally block out my surroundings.

On these new AirPods, Transparency mode is noticeably improved. There are also a few new features that were totally absent on the originals…

Adaptive Audio is bizarre. My neighbor using his leaf blower sounds exactly the same as it does when Transparency mode is enabled. When running my CNC machine, on the other hand, Adaptive Audio blocks more sound than Noise Cancellation mode does. Other times, it is as if it can’t decide what to do. Adaptive Audio attempts some kind of disorienting half noise cancellation thing whenever I am washing dishes. Overall, I probably prefer it to Transparency mode although I hope future software updates significantly improve it. It is still in need of lots of tuning.

Conversational Awareness: I wasn’t expecting to like this but I’ve been pleasantly surprised. It is smart enough not to activate for quick exchanges—think: a passing “excuse me” in the grocery store. The moments where it does fade down audio make the AirPods feel prescient. I would start reaching towards my ear to manually pause when I would notice that the audio had already automatically stopped a moment prior.

Personalized Volume: I am not entirely sure what this is doing. I only notice it when it randomly makes my audio way too quiet. Maybe it is doing great work behind the scenes 90% of the time but I don’t think it is worth it when it feels broken the other 10% of the time. I turned this setting off.

So, are the AirPods Pro 2 as much of an upgrade over the AirPods Pro 1 as the AirPods Pro 1 were over the original AirPods?

Going from the original AirPods to the first generation Pros was revelatory. As much as I adored my original AirPods, as soon as I got the Pros they instantly felt like garbage.

Upgrading from the first generation Pros to the second? If I accidentally left the house with my old pair of Pros I would be annoyed but if I were more than a block or two away I wouldn’t turn around to swap them out.

§ Station Eleven is a really well made television show. It is somehow better than I remember it being on my first watch. The seventh episode could stand on its own as an unassailable masterpiece of a short film.

§ Tangentially related: It occurred to me that fewer things have ultimately changed as the result of our recent global pandemic than I would have anticipated. Of course a number of things have changed and it is probably still too early to see all of the eventual consequences but, sitting here today, sweeping societal effects feel relatively few and far between.

§ As we approach the end of the year, I’ve been trying to track down a replacement for a particular planner my fiancée prefers. It turns out the company that makes her beloved 2023 planner is named Bluesky. So, not only do they make pretty good planners, they also must be the reason the invite-only social media upstart Bluesky (“bsky.app”) has such an awful domain name.

§ Links

- How to see beauty, a beautiful multimedia blog post by Ralph Ammer

- Happy birthday to my firstborn baby boy - “One thing that prevented us from receiving more casual help is that so many people are so anxious about holding a baby, in a way that seems related to their confusion about their own bodies. My baby did not need to constantly be held as carefully as one might hold a precarious stack of cut-glass doves, but could without difficulty be transferred from one person or place to another by grabbing him under the armpits like the small monkey he is.”

§ Recipes

- German apple pancakes might become a new Sunday morning staple this fall.

- Chicken cacciatore was good! I’m excited to try the mushroom variant next.

-

§ I have been thinking about planting a fruit tree next year. Maybe a pear? They have both a higher ceiling and a lower floor than apples. Plus, good pears are next to impossible to find in stores.

§ For the past two weeks I’ve been taking the back roads to work. No highways at all. Sure, it takes a few minutes longer than the highway-centric route that I’ve been accustomed to but it is 100% worth it.

The commute is significantly less stressful and I get the opportunity to see parts of my city that I would never otherwise see. I’ve already visited two new businesses and I have a growing list of small shops and restaurants that I can’t wait to check out.

§ I am happy to report that the raspberry bush that I planted at the end of June is doing well! In fact, it looks like I will have a second harvest of fruit soon.

§ I think I’m going to trade in my iPad Pro to Apple for a pair of the newly revised AirPods Pro 2.

I haven’t used my iPad since getting a reMarkable tablet a few months ago. I promised that I would sell it if this were the case and, with Apple expected to release a redesigned model next year, the clock is ticking.

I’ve had the original AirPods Pro for years and use them heavily. I kind of wish I could view statistics detailing how many hours they are active each day but, at the same time, I don’t think I really want to know the answer. I certainly use them more than any other electronic device in my day-to-day life.

John Gruber’s recent quip that “AirPods Pro 2 are as much better than the original AirPods Pro than the original AirPods Pro seemed from the original non-pro AirPods” may have finally tipped the scales for me in favor of upgrading.

§ I got five free tickets to Cedar Point that I will have to use before the end of October.

§ Hey! That Shopbot bed extension I ordered a while back finally arrived. I can now cut things up to 48x48”.

§ I watched Talk to Me. It started off as one of the better horror movies in recent years. The characters are compelling, the premise is original, the effects are impressive while remaining low-key. Somewhere around the half-way mark things started to fall apart though. It never became actively bad. It more felt like the writers didn’t flesh out a cohesive ending in advance and instead just kind of winged it as they went along.

Afterwards, Fionna and Cake stole the spotlight. So far, I am enjoying it more than Adventure Time.

§ I visited the Lake Metropark corn maze. It 1) was a blast and 2) checked an item off my fall bucket list.

§ Links

§ Recipes

- I tried to make mochi. While perhaps not a disaster, it was certainly not successful by any means.

-

Getty Images is partnering with Nvidia to launch Generative AI by Getty Images, a new tool that lets people create images using Getty’s library of licensed photos.

Generative AI by Getty Images (yes, it’s an unwieldy name) is trained only on the vast Getty Images library, including premium content, giving users full copyright indemnification. This means anyone using the tool and publishing the image it created commercially will be legally protected, promises Getty.

I last wrote about Getty back in February when they filed a lawsuit against Stability AI at the same time their largest competitor, Shutterstock, announced their own image generation service. I was in favor of their strategy at the time. Generative AI presented a clear opportunity for differentiation. It seemed as though they were positioning themselves to be stringent supporters of human-made art:

My knee-jerk reaction is to say that Getty is behind the times here but, after thinking about this a little bit more, I am less sure about that.

If Shutterstock starts re-licensing AI generated images, why would you pay for them instead of paying OpenAI or Midjourney directly? More to the point, why not use Stable Diffusion to generate images, for free, on your own computer?

Getty Images, on the other hand, gets to be the anti-AI company selling certified human-made images. I can see that being a valuable niche for some time to come.

Do you just need an obligatory feature image to slap on top of your SEO-bait blog post? Go to Shutterstock or DALL-E or any of the hundreds of fly-by-night AI image generation services. If you want to Support Human Artists, however, Getty is the only place to go.

With today’s announcement Getty has abandoned their opportunity for differentiation. I’ll be interested to see who steps up to fill that role.

-

§ It is now autumn. Welcome to my autumnal blog.

Inspired by Matt Kirkland and the fact that a perk of my job is that I can now visit a ridiculous number of local parks and museums for free, I’ve put together a bucket list of places I would like to visit before winter:

- The Botanical Gardens

- Holden Arboretum

- Shaker Lakes Nature Center

- Penitentiary Glen

- Lake Metroparks Farmpark

- The Fairport Harbor Lighthouse

- The Natural History Museum

§ I’ve been listening back to some old Vergecast episodes and landed on one where they discussed the big Reddit blow up as it was ongoing. Looking back on it all now, it’s clear that Reddit unequivocally won.

If you were to have asked me at the time, I would have predicted that either Reddit would make major concessions to developers or a serious platform competitor would emerge. Neither of those things happened. I don’t love this outcome but I think it has something to teach us about platform stickiness.

§ The state of third-party calendar applications is dire. Apple’s default calendar app is good for casual use but as soon as you need any additional features, settings, or customization the only apparent alternative available is Fantastical.

Probably most people just use Google Calendar or Microsoft Outlook? Those aren’t what I am looking for either, though. I would love to see a new entrant into this space. No need for buzzy AI features. Just a solid, well designed, service agnostic calendar app.

§ Stable Audio from Stability AI is impressive! A while ago, I found Riffusion which uses Stable Diffusion to generate images of audio spectrograms. Stable Audio, in contrast, uses a more traditional technique.

We’ve come a long way. Generated songs no longer sound like they are being played over an underwater gramophone. Still, anything with vocals is weird and inhuman but that is at least half the fun!

§ I saw my first spotted lanternfly this week. So far we’ve been able to largely avoid them in northeast Ohio. I’m worried that might not last too much longer.

§ I bought two pairs of pants a couple of months ago that I still haven’t worn because I didn’t realize until it was too late that they didn’t have a coin pocket.

I have been carrying AirPods in that strange little fifth pocket every day since 2017. It has become an integral feature of my wardrobe in a way that I had not consciously appreciated until it wasn’t there.

§ After the grave state of affairs last week, I am rewatching Utopia (2013). The writing is smart, the cinematography is excellent, and it has the best soundtrack since Twin Peaks, composed by Cristóbal Tapia de Veer of White Lotus fame.

§ I used Blender to animate a 3D model for the first time since college. Blender is still wacky and unintuitive but nevertheless I was able to get everything working pretty quickly. Sometimes a ten second animation can communicate an idea more effectively than any amount of drawing and writing ever could.

§ I saw Alice Longyu Gao live on Saturday. The day before the concert her tour car was stolen along with all of her equipment, although you never would’ve known watching her show. It was great!

§ Links

-

Jennifer Pattison Tuohy, The Verge:

At its fall hardware event Wednesday, [Amazon] revealed an all-new Alexa voice assistant powered by its new Alexa large language model. According to Dave Limp, Amazon’s current SVP of devices and services, this new Alexa can understand conversational phrases and respond appropriately, interpret context more effectively, and complete multiple requests from one command.

I wrote about how bewildering I found it that no major company had integrated large language model technology into their voice assistants back in January. January!

It’s the APIs that are key, says Limp. “We’ve funneled a large number of smart home APIs, 200-plus, into our LLM.” This data, combined with Alexa’s knowledge of which devices are in your home and what room you’re in based on the Echo speaker you’re talking to, will give Alexa the context needed to more proactively and seamlessly manage your smart home.

The big difference between conversational AIs like ChatGPT and traditional voice assistants is that the latter has to interact with the outside world. If the new Alexa can’t turn on my lights and set timers that will be a regression. This sounds like it will use a ReAct pattern, which is a smart approach, but only time will tell how solid it actually is.

Ultimately, I think the reason that more companies haven’t yet introduced LLM-backed assistants is that they are an expensive replacement for a technology end-users have traditionally gotten for free. Amazon seems unsure about how they will handle that.

Limp said that while Alexa, as it is today, will remain free, “the idea of a superhuman assistant that can supercharge your smart home, and more, work complex tasks on your behalf, could provide enough utility that we will end up charging something for it down the road.”

Ironically, the two uses of the word “super” in the previous quote don’t inspire much confidence.

-

Architecture is a public art, a vernacular art, and a background art: it is created by a huge range of people, and experienced involuntarily by an even wider one. This means that we need architectural styles that are as accessible as possible, to the full range of people who live with what we build, and to the full range of builders who create it.

We enjoy creating things we can inhabit. We build blanket forts as soon as we can crawl. Later, we graduate to making treehouses and stick tepees in the summer and igloos in the winter. Failing that, we build things we imagine we could inhabit: Lego towers, sand castles, doll houses, vast Minecraft fortresses…

Once we reach adulthood, architecture—building structures—becomes inaccessible to all but the select few that have chosen it as a profession.

I’ve spent this summer building a small greenhouse in my backyard. It has been immensely satisfying to simply open its door and watch the way sunlight plays over its interior. To cheer it on as it withstands heavy wind gusts. To shelter under its roof during rainstorms. To know how to repair it because I put in all of the screws to begin with.

But my little greenhouse is also totally prohibited! I didn’t obtain a permit from my city before I started construction. At any time my local building department could mandate that I take it all apart and then fine me for the trouble. Of course, building codes and permitting serve a valuable function. Nonetheless, we need an outlet for amateur architecture because the desire to build doesn’t die after childhood.

-

§ My reMarkable tablet is seeing less use each week. I am now back to primarily using a physical notebook. I went with a nice large A4 spiral bound notebook which I have been using in “landscape mode.”

I appreciate being able to physically collage elements together. Paint samples and printed Fusion 360 renders can live inline with my notes. I can draw on top of them, highlight sections, and cross things out.

Paper preserves history. The digital note taking dream is that everything is infinitely flexible—you can move, erase, replace, and rewrite losslessly—but maybe that is a detriment to creativity. When looking back at old digital notes, only the final result is visible. The iterative process leading up to it is lost.

§ Apple’s “Wonderlust” event was on Tuesday. Some brief thoughts:

- Apple Watch Series 9 recognizes a new “double-tap” gesture which is almost identical to the “select” gesture on the upcoming Vision Pro headset. I find this fascinating. The iPod click wheel, two finger scrolling on the Mac, slide to unlock, pinch to zoom: Apple has a long history of introducing ubiquitous gestures. “Double-tap” is the first new multi-platform gesture in a long while and the first one that isn’t mediated by a touchpad or screen.

- USB-C is long overdue. I am super excited it is finally on the iPhone.

- Titanium seems great but the new colors couldn’t be more boring.

- The new action button on the iPhone Pro models is alluring and will probably be the single largest factor tempting me to upgrade.

- The Pro models will be able to capture 3D Spatial Videos for the Vision Pro. Very cool but a lot was left unsaid. What is it like to view these videos on the iPhone itself? Will it be able to capture Spatial Photos too? How much depth data can you actually capture from two lenses so close together? I wouldn’t be surprised if next year’s iPhone 16 features a new camera arrangement for more robust spatial image capture.

§ My Keurig has been dying a slow death. I hit a breaking point this week when I realized it just really isn’t the right tool for the role it serves.

Most of my single-serving caffeine consumption comes from my espresso maker. The Keurig’s primary job is to fill up two travel thermoses each morning, a task that requires four K-Cup pods to accomplish. As I was waiting for one of those K-Cups to sluggishly brew, it finally occurred to me that brewing a traditional pot of coffee would be easier, faster, cheaper, and far less wasteful.

After spending an afternoon reading up on reviews I ultimately decided to get Oxo’s 8-cup brewer.

The coffee it makes is actually good.

Previously, I often wouldn’t finish my thermos full of Keurig coffee. It served more of a utilitarian role. The coffee it produced wasn’t bad, by any means, but it absolutely wasn’t good. By comparison, the coffee that the Oxo makes is cleaner, smoother, and is far less acidic. It is actually good! I’ve been finishing my coffee on my drive to work and then wishing I had more!

On Monday, I got a call from the dealership. I assumed it was to tell me my car was ready for pick up.

Nope.

They successfully replaced the BECM, the reason my car was in the service center to begin with. During reassembly, however, the technician broke a dozen of the bolts that attach the battery assembly to the underside of the vehicle.

The technician was calling to tell me that, for not entirely convincing, warranty-related reasons, they will need to order replacement bolts directly from some central General Motors parts center, which could take a while. At least they replaced the blown tire on the loaner car.

I was able to pick up my car, finally, on Friday. Wow! After almost two weeks of driving a big gasoline-powered SUV my tiny plug-in hybrid feels unbelievably nimble. It is good to be back.

§ I desperately need some good TV show suggestions. I’m wallowing in the depths of Hulu right now and it is getting bleak. Seriously, send me an email.

§ I am now officially a source behind some hard hitting investigative journalism.

§ Links

- Same.energy and River

- An interview with Coolmath Games. “I’m, like, in the scene now. I have a lot of connects who do other social media stuff. I’m friends with Axe Body Spray and C4 Energy and like, TRUFF Sauce, Heelys—”

- A literary history of fake texts in Apple’s marketing materials

-

§ I saw the Cleveland National Airshow for the first time in… 15 years? I really don’t consider myself to be an airplane guy but let me tell you: aerobatics is exhilarating. It just is.

§ I met with a group of engineering students who I will be advising on a large interactive project. Something along the lines of Daniel Rozin and Peter Vogel. It is all in the very early stages but I can’t wait to see what they end up creating!

§ Joining some photo sharing sites, years after leaving Instagram, has been a surprisingly fulfilling experience.

I capture a lot of images—and I am proud of some of them—but they get lost in a monolithic photo library filled with momentarily useful screenshots and pictures of plants for use with Apple’s Visual Lookup feature, which I employ constantly.

I’ve tried creating a separate iCloud photo album for my favorite shots but that never seems to stick. The social aspect of sites like Flickr and Glass, while not particularly important to me in its own right, has a knock-on effect of making me more mindful in my curation and editing process.

§ The great thing about running this website is that I can use it as a hub for everything that I post online—as they say, “publish on your own site, syndicate elsewhere.”

In that vein, I set up a new photography page on this blog. It is its own little thing so you won’t see new photos pop up in the main feed. You will have to subscribe to its dedicated RSS feed if you want to stay up to date as I post new stuff.

§ After reading all of the above, it shouldn’t be a surprise that I brought out “the big camera” for the first time in a few months. The picture quality is unquestionably better than my iPhone but it is also distinctly less convenient.

A standalone camera that automatically syncs with Apple Photos would go a long way towards alleviating that inconvenience.

Not too long ago Apple began offering third-party device manufacturers access to their Find My network so this idea isn’t totally outside the realm of possibility.

§ Huh, my car trouble last week was because of a BECM issue after all. I am very thankful I was able to get this all sorted out before my warranty expires.

Finally, to put a bow on this whole saga, I got a flat tire while driving down the highway in the loaner car. At least I still know how to change a tire despite not having done it since high school.

The dealership promises that my car will be ready for pickup on Monday.

I miss living somewhere with functional public transportation.

§ Links

- Manuel Moreale’s new People and Blogs interview series is delightful. So far, he has interviewed Manton Reece and Rachel J. Kwon.

- A list of browser-based single use tools

- A living web

- Gossip’s web

§ Recipes

- Turmeric rice

- Fair warning: this website is really rough. It crashes Safari on my phone unless I use an ad blocker. The recipe is great, though!

-

§ We have secured a wedding date: July 13, 2024.

§ It’s Labor Day weekend which means it is time for the county fair.

✓ Delicious milkshakes

✓ Adorable baby goats

✗ Pigs in tiny cages with names like “Porkchop”

§ The neighborhood park recently got pickleball courts installed. As someone who

- enjoys playing badminton and

- has never been remotely interested in tennis

I am curious to see where pickleball falls between the two. One of these days I’ll purchase the equipment necessary to find out.

§ I watched The Peripheral after seeing how angry John Gruber was when he learned Amazon had canceled the show’s second season.

The show is set in the year 2032, and its restrained near-future speculative technology is the most interesting part. The glut of guns and violence would have benefited from a similarly disciplined approach.

§ Jury Duty was exceptional. I am sure all of the praise it has received will make it tempting to create another season but I think that would be a mistake.

One of the actor-jurors, Edy Modica, also directed and starred in the short film Nicole which is excellent in a sort of Ryan Trecartin type of way.

§ On Friday morning my Chevrolet Volt suddenly stopped working. The battery indicated that it was fully charged yet displayed zero miles of available range. It was extremely loud and there was a concerning sluggish quality to each acceleration and deceleration.

After I coaxed the car back home I tried restarting it and, suddenly, everything went back to normal? Bizarre.

It could be a BECM issue? If so, it is comforting to know that BECM issues can occasionally cause a sudden, random loss of propulsion altogether. I have 5,000 miles remaining on my 100,000 mile warranty so good timing, at least?

After calling—eleven—local dealerships I was able to find one with a loaner vehicle available—a 2018 Equinox.

The shop won’t be able to look at my car until next week though. To be continued.

§ I set up a Flickr account. No guarantees I’ll stick with it but it is great to see that it’s still going strong after all of these years.

I also started a Glass account which is the new hotness. It has much more of an Instagramy social focus which is a bit of a turn off but the interface is totally fun and modern.

§ Links

- A collection of anthropomorphic objects

- Singapore in color

- The great dissolve

- TextFX

- Matt Webb’s PartyKit sketchbook

§ Recipes

- Fresh mint ice cream

- I zested individual chocolate chips with a microplane before learning the correct method to add flaky chocolate shavings to ice cream. This wasn’t quite as tedious as it sounds but it was close.

-

Matt Webb continues to write the internet’s most thought provoking meditations on AI:

If we are going to have AIs living inside our apps in the future, apps will need to offer a realtime NPC API for AIs to join and collaborate […]

You create a “pool” or a cursor park or (as I call it) an embassy on the whiteboard. The NPCs need somewhere to hang out when they’re idle. […]

NPCs can be proactive! The painter dolphin likes to colour in stars. When you draw a star, the painter cursor ventures out of the embassy and comes and hovers nearby… “oh I can help” it says. It’s ignorable (unlike a notification), so you can ignore it or you can accept its assistance. At which point it colours the star pink for you, then goes back to base till next time. […]

Cursor distance = confidence. When an NPC wants to be proactive, it can hover nearby. It can be pushy when it knows it can help. (It can remember not to pipe up again if it is banished.) There’s a lot of resolution to explore here.

Visual interfaces need a ‘suggestion language’ which is as good as ghosted text is for autocomplete.

Chat is a language model’s terminal interface—a critical affordance when low level input is required but a poor choice when discovery and intuitive ease of use is a priority.

-

§ I visited “the smelliest food festival in America” and purchased a handful of different garlic varieties that I’ll try planting in the fall.

I made a revised prototype of my Technic / Tinkertoy inspired modular architecture building blocks this time using strut channel nuts embedded between two layers of 3/4” plywood.

I also placed an order for a Shopbot component that will increase my cut area from 2x4’ to 4x4’. I can’t wait.

§ I watched From which has big Haven vibes.

One of the primary differences is that Haven has more of a “monster of the week” structure which is to its benefit. The show-runners are able to take their time to draw out a compelling central plot while each minor story arc provides a drum beat that keeps things interesting on a week-to-week basis. Watching From, I found myself feeling frustrated by extended character-building storylines, looking instead for resolution on the show’s central mystery.

Ultimately, I recommend watching From if you enjoy mysterious location-focused shows like Lost, The Leftovers, and Haven. From might not be quite up there with the best of them but it is nevertheless a solid addition to the genre.

§ I finally had to fill my car up with gas for the first time in nearly two months. During the time that elapsed I used 7.27 gallons of gas and drove 2,190 miles for a total of 301 MPG. Not bad at all!

I realize that I am way off from my initial goal of “no more gasoline before Thanksgiving.” I am sure that I could have accomplished that goal if I only used my car for my regular commute to and from work. A majority of my gas usage came from out-of-the-ordinary trips: drives out into the country, a trek across the state, etc. Cutting those out just isn’t particularly feasible, though. A lot of the fun stuff here requires a bit of a drive! I’ll be totally satisfied if I can maintain an average around 300 MPG.
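For anyone who wants to check my math, the MPG figure is just miles driven divided by gallons burned. Here is a quick Python sketch using the numbers above; the function name is something I made up for illustration.

```python
def miles_per_gallon(miles: float, gallons: float) -> float:
    """Average fuel economy over a stretch of driving."""
    if gallons <= 0:
        raise ValueError("gallons must be positive")
    return miles / gallons


# Figures from this fill-up: 2,190 miles on 7.27 gallons of gas.
mpg = miles_per_gallon(2190, 7.27)
print(round(mpg))  # 301
```

The same division works in reverse: to hold a 300 MPG average over that distance, I get 2,190 / 300 = 7.3 gallons to work with.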

§ I guess there was a tornado in Cleveland Thursday night? I slept through it.

Possibly related: I’ve definitely caught a cold.

§ Links

-

§ We harvested five pounds of produce from the garden this week, nearly all of which were tomatoes. The San Marzano, while still suffering from blossom end rot problems, is our heaviest producer by far.

§ My barber casually referred to my collection of random interests (building a greenhouse, growing and preserving food, making bricks, etc.) as “prepper stuff.” My reaction was to distance myself from the characterization but, thinking back on it now, I don’t know if I can pinpoint exactly what I felt was so wrong about it.

I guess it is the popular association of preppers with end-of-the-world disaster scenarios, weapon stockpiling, and distrustful “every man for themselves” worldviews. Self-sufficiency is great; it is the underlying motivation that can cause problems.

I am fascinated by how things work. Bread making isn’t some innate ever-present skill. It is an arduous process that had to be invented, iterated, perfected, and passed down through generations. I am not going to grow wheat and then harvest, thresh, winnow, and mill grain every time I get a craving for fresh bread. Understanding the process, however, gives me a unique appreciation that I do not think I would otherwise have.

§ I have been enjoying Dark Noise, a white noise “sound machine” application.

The killer feature is the ability to create custom sound mixes. So far, I have made myself two primary mixes: a sleep mix and a mix to play during the day as I work. My sleep mix includes sounds like “rain on tent,” “windy trees,” and “crickets” while my daytime mix has “birds,” “wind chimes,” and “creek.”

As someone who likes to have audio playing in the background throughout most of my day, I am surprised I hadn’t given Dark Noise a shot until now.

§ I’ve only recently learned about the pawpaw tree which grows “the largest edible fruit indigenous to the United States.” It is native to Ohio and its fruit is said to taste similar to a banana or mango.

They ripen only during a short window of time in late September and early October. I’ll need to try to track some down then. If it tastes good I may try to grow one next to my maypop, another surprisingly tropical midwestern fruit.

§ I got a long-neglected Shopbot CNC machine up and running at work.

The cut area is only 2x4’ which is extremely limiting compared to the 4x8’ machine I’ve used in the past. Regardless, there is something deeply satisfying about drawing something on a computer and then watching as a robot cuts it out for you in real time.

§ Links

§ Recipes

- Tortilla Española

- This was pretty good but not quite as flavorful as I expected. It’s missing something but I can’t put my finger on it.

- Braised chicken with coconut milk, tomato and ginger

- It can’t compete with my other favorite braised chicken recipe but this was still delicious.

-

§ The soil in my yard is something like 80% clay, 20% large rocks. The process of creating my hügelkultur left me with plenty of each.

The rocks were easy. I was able to add them to the dry stacked walls that define my garden beds.

The clay is the hard part. In the past I’ve collected it, along with all of my other unamended soil, in an unceremonious pile in a back corner of my yard.

I’ve always been interested in learning how to extract and purify the clay for pottery making, though, which is exactly what I tried this week.

It wasn’t as difficult as I anticipated it would be and the result was a large ball of clay that was, to my eyes, nearly indistinguishable from professional earthenware.

The plan now is to process the clay into bricks then use those bricks to make a small kiln.

§ I took Friday off to look at wedding venues. We have a date or, at least, an array of possible dates orbiting mid July of next year.

§ I didn’t know it was possible for blossom end rot to manifest inside of tomatoes. Like, the outside looks totally fine and then, once sliced, you’ll notice that the seeds are covered in a black goo.

I noticed this happening with my San Marzano tomatoes. After throwing out at least a dozen fruits with obvious exterior end rot, I finally picked a few nice looking ripe fruits but then only noticed the gross center after slicing them.

This is mostly annoying because I originally intended to can these tomatoes whole which I now won’t feel confident doing until I can resolve this issue.

§ I’ve been playing the New York Times Mini Crossword. Full-sized crosswords have always felt like too large of a commitment. Their bite-sized counterparts fill a nice sub-ten-minute niche right next to Connections.

§ I’ve been evaluating Lego’s new(ish) Spike robotics platform. I am inherently suspicious of proprietary systems like these although, after a couple of hours fiddling, I am beginning to warm up to this set. There is enough modularity and flexibility built in that I don’t feel pigeon-holed into particular pre-defined projects.

§ Links

- 54 years later, the world is finally ready for Turn-on

- A very long piano

- Oscilloscope Music

- The uncoloring book

-

§ Fresh peaches might be the best fruit. The key is to pick them ripe which makes shipping them next to impossible. This means you will need to find them locally. Now is the time to find your local orchard. I promise it will be worth it.

§ I harvested all of the garlic that I planted in early May. I now realize that spring was the wrong time of year to plant garlic but, nevertheless, I am happy with my eight small, though well developed, bulbs.

§ I’ve embarked on a huge deep dive on modular architecture this week starting with Lego bricks and culminating in a prototype that aims to answer the question: “Can you build a house using enormous, wooden, Technic-style, 2x4s?”

§ Cabel Sasser wrote a fascinating post about the challenges that arise when designing public artworks.

This has been one of the most unexpectedly interesting facets of my job designing exhibits for an interactive museum.

some designers are amazing at imagining things, but not as amazing at imagining them surrounded by the universe. […]

It almost seems like there’s a real job here for the right type of person. “Real World Engineer”? Unfortunately, the closest thing most companies currently have is “lawyer”.

The challenge—and the fun—comes from the reality that the public will always interact with your work the way they want to. Your job is to guide their experience through thoughtful design, without the luxury of direct instruction.

§ Infinity Pool was wild. With Alexander Skarsgård, Mia Goth, and Brandon Cronenberg you just know you’re in for quite a trip.

Brandon Cronenberg’s previous film, Possessor, had similarly striking visuals but ultimately didn’t stick with me as much as I expect this film will.

§ One of our quails was attacked despite being in a coop that we reinforced with two layers of 1/2” hardware cloth. It was all very upsetting. For now we’ve moved them into the greenhouse. While it isn’t ideal, in terms of heat, as far as I can tell it is basically avian Fort Knox.

§ Links

-

Google plans to overhaul its Assistant to focus on using generative AI technologies similar to those that power ChatGPT and its own Bard chatbot, according to an internal e-mail sent to employees Monday […]

As part of the move, Google is reorganizing the teams that work on Assistant… The move will involve eliminating dozens of jobs, Axios is told, out of the thousands of employees who work on the Assistant

Google Assistant isn’t as embarrassing as some of its competitors. Still, it was shocking to read that “thousands of employees” work on Assistant.

I’ve long bemoaned the fact that Google, Apple, and Amazon haven’t incorporated generative AI into their legacy “AI assistants.” Microsoft replaced Cortana with Bing AI a couple of months ago but I am not sure anyone ever used Cortana to begin with.

This is clearly a step in the right direction from Google. Apple and Amazon appear to be on a similar path themselves. It will be interesting to look back at the state of the assistants this time next year.

-

§ Our first red cherry tomatoes have ripened. They are a few weeks behind our crop from last year but no less delicious. We’re still waiting on the yellow and black cherries.

I also ate my first home grown summer squash of the season. The plant has been uncharacteristically prolific but, for whatever reason, many of its smaller fruits are suffering from blossom end rot. I tried supplementing with calcium and we will see if that helps.

§ OpenAI took its AI detection tool offline due to low accuracy. This isn’t surprising—I’ve seen a number of stories about professors rejecting student essays after they were inaccurately flagged as being AI written.

I suspect OpenAI primarily developed its AI detection capabilities for internal use, to avoid “model collapse” by filtering today’s AI generated content out of future training runs. When used this way, an overzealous classifier is totally fine. Sure, you might filter out some genuine content but that isn’t a huge deal. When the same classifier is used as the sole means to judge the authenticity of student work, however, false positives start to become a lot more impactful.
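To make the false positive problem concrete, here is a rough Python sketch. Every number in it is made up for illustration: the essay counts and both classifier rates are my assumptions, not figures from OpenAI, and `flag_counts` is just a name I picked.

```python
def flag_counts(human_essays, ai_essays, true_positive_rate, false_positive_rate):
    """Return (ai_flagged, human_flagged) for a hypothetical AI-text classifier."""
    ai_flagged = ai_essays * true_positive_rate
    human_flagged = human_essays * false_positive_rate
    return ai_flagged, human_flagged


# Hypothetical numbers: 900 human-written essays, 100 AI-written ones,
# and a classifier that catches 90% of AI text but also flags 5% of human text.
ai_flagged, human_flagged = flag_counts(900, 100, 0.90, 0.05)
wrong_share = human_flagged / (ai_flagged + human_flagged)

print(f"{human_flagged:.0f} human-written essays wrongly flagged")
print(f"{wrong_share:.0%} of all flagged essays are actually human-written")
```

With these made-up rates, about a third of the flagged essays are human-written. Dropping those from a training set costs almost nothing. Treating each one as proof of cheating is a different story.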

§ We got a new stove! It is a lot like our old stove except, in this one, the oven actually functions properly. The burners supposedly have a higher BTU rating too but that hasn’t had any noticeable impact, in practice.

§ I started digging a hügelkultur which I have been mistakenly calling a hinterkaifeck.

You might recall that I have been spending the summer sawing down a lot of tree branches and overgrown bushes.

The volume of branches this has produced has been challenging. Sure, making a wattle fence has been great fun but that only uses so much wood. I’ve started to annoy my city’s garbage collection service with the bags upon bags of sticks and leaves I’ve been attempting to throw out each week.

So, hey, maybe a hügelkultur would be perfect. You just bury some wood in the ground, plant things on top, and, as the wood breaks down, it feeds the growing plants above with a continuous supply of carbon.

§ Links

§ Recipes

I tried making cheese with raw—scratch that, “pet”—milk that I purchased from a local Amish grocer.

I spent a while trying to decide between a queso fresco and a farmer’s cheese before I realized that they are essentially the same thing. Mozzarella felt a bit too ambitious as an entry point.

The whole process was not as difficult as I expected. Warm up the milk, add acid, wait, scoop out the curds, drain. That is really it.

I also made ricotta with the leftover whey. I still have so much more whey left to use, though. I’ve read that you can use it in place of water in bread doughs. It is also supposed to have some unique qualities when used to soak beans. Some people make whey lemonade.

This whole experience, while fun, makes me disinclined to ever want a dairy cow. At more than seven gallons of milk per day, I would need to get way more into dairy before that ever became anything more than burdensome.

A goat on the other hand…

-

§ All of our pepper varieties (jalapeño, cayenne, poblano, banana, and Hungarian) are growing fruit.

Thanks to some early pruning help from the local deer population, two of our cherry tomato plants are still a manageable size. The San Marzano, however, is as vigorous as ever.

I don’t even know what to say about the blackberry. It has already totally outgrown the patch I planted it in last year. I am both proud and overwhelmed.

Our purple passionflower, which I had thought hadn’t survived the winter, has come back with a vengeance. There are shoots popping up as far as four feet away from the original plant. Researching more now, I see that it is considered invasive in some areas.

Humid weather earlier in the week gave way to a violent, cathartic storm on Thursday night, complete with thunder, lightning, and hail. Relentless sheets of rain flattened our young, top-heavy plants.

By the next evening, just about everything was able to perk itself back up. The only casualty was a large, fruit-bearing stem of one of the cherry tomato plants.

§ I rode an e-bike for the first time. As an exercise device it was, of course, less effective than a traditional bicycle. As a means of transportation, however, it might be unbeatable.

The experience considerably expanded what I can see as a viable car-free commute. Unfortunately, my current commute would be upwards of an hour each way. Even on an e-bike that is not exactly viable.

§ After some struggle, I’ve finally made a breakthrough on my capacitive touch wall mural music sampler project. The turning point came when I decided to stop using MIDI altogether and instead use the Touch Board as a basic USB keyboard.

This was all made possible by finally finding an application I had been searching for this whole time: a simple, keyboard-focused sampler app.

The next step for the project is to start prototyping its physical design.

§ I’ve been playing Connections, the latest Wordle-esque puzzle game from the New York Times. The goal is to sort a four-by-four grid of words into four groups based on their commonalities. Sometimes the solutions are straightforward (flute, clarinet, harp, and oboe are all musical instruments), but often a few words are included that make things more ambiguous. Each game takes less than five minutes to complete, and it is neither difficult enough to be frustrating nor so easy that it feels mindless, which is a tricky balance to strike for a game of this kind.

§ The Queen’s Gambit was captivating and further evidence for my theory that limited-run series are always better than their indefinite counterparts.

§ Links
